
TL;DR

- The EU AI Act reserves its strictest rules for banned uses, high-risk systems, and large general-purpose AI (GPAI) models.

- Banned uses include manipulative techniques, emotion recognition in workplaces or schools, untargeted facial scraping, and certain biometric identification.

- High-risk systems need formal compliance controls: risk management, logging, human oversight, and a quality management system.

- All GPAI model providers have documentation and transparency duties; models above the systemic-risk threshold face extra safety evaluation, incident-reporting, and cybersecurity obligations.

- Limited-risk AI still needs clear user disclosure and labeling for AI-generated or deepfake content.

- Non-compliance can be extremely expensive, with fines up to 35 million euros or 7% of worldwide annual turnover, whichever is higher.

- The safest strategy is to classify every AI system early, remove any prohibited features, and build compliance into the product roadmap now.

Why this matters now

Many leaders still think the EU AI Act is a European problem for European companies. That view is too narrow. The Act applies by impact, not just by address: non-EU companies can fall inside its scope as soon as they place AI systems on the EU market or the output of their systems is used in the EU. For mid-sized tech firms, that matters because compliance gaps can sit inside products, SaaS tools, vendor workflows, and HR systems that look ordinary on the surface.

The practical issue is simple. EU AI compliance is becoming a product design problem, an engineering problem, and a procurement problem at the same time. That is why CEOs need a working model, not just a legal summary. The goal is to know which systems are exposed, which ones are banned, which ones need heavy controls, and which vendor relationships could create liability you never intended to own.

The AI Act uses a risk-based structure. It regulates what the AI does and how it affects people, not just the model itself.

What the Act covers

The EU AI Act defines a four-tier framework. At the top is unacceptable risk, which is banned. Next is high-risk, which carries the heaviest operational burden. Then comes limited risk, which mainly requires transparency. Finally, minimal risk has no special AI Act obligations.

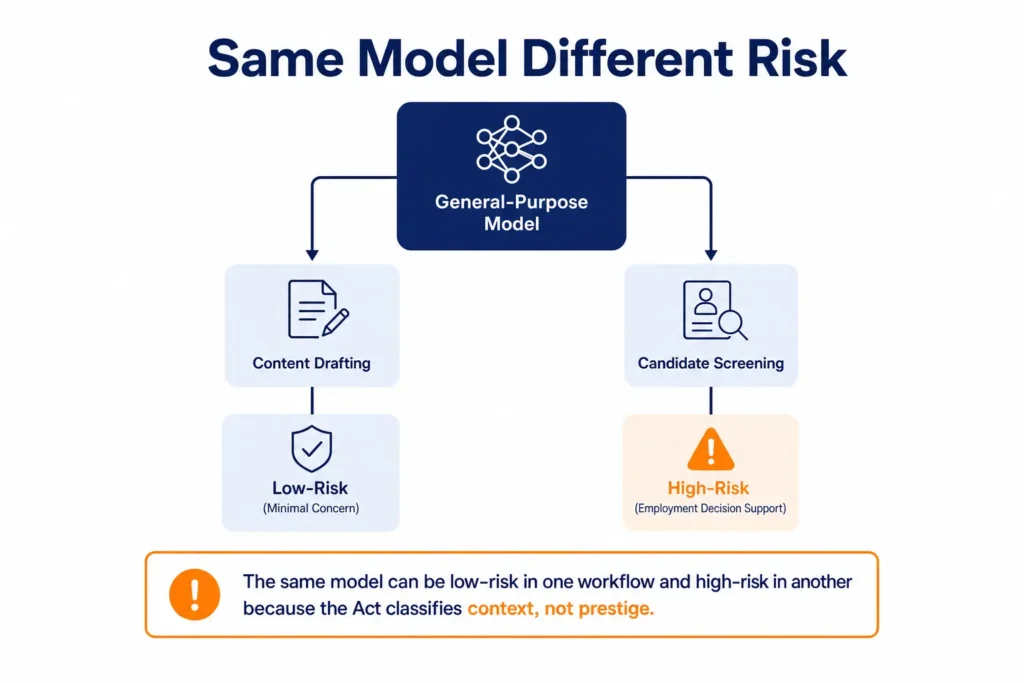

This structure matters because the same model can fall into different categories depending on use case. A general-purpose model used for drafting emails may be low concern. The same model used to screen candidates or influence employee decisions can trigger high-risk obligations. That is why the Act forces companies to classify systems by context, not by how advanced the underlying model is.
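To make the "context, not model" point concrete, here is a minimal Python sketch. The use-case labels and the mapping are hypothetical shorthand for examples mentioned in this article, not legal categories, and defaulting unknown use cases to high-risk is an assumption that mirrors the "treat it as high-risk until reviewed" advice in the 90-day plan.

```python
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Hypothetical, deliberately incomplete mapping from use case to tier.
# Labels are illustrative shorthand, not legal definitions.
USE_CASE_TIERS = {
    "workplace_emotion_inference": RiskTier.UNACCEPTABLE,
    "untargeted_facial_scraping": RiskTier.UNACCEPTABLE,
    "candidate_screening": RiskTier.HIGH,
    "credit_scoring": RiskTier.HIGH,
    "customer_chatbot": RiskTier.LIMITED,
    "email_drafting": RiskTier.MINIMAL,
    "spam_filtering": RiskTier.MINIMAL,
}


def classify(model_name: str, use_case: str) -> RiskTier:
    """Classify a deployment by its use case; model_name plays no part in the result.

    Unknown use cases fall back to HIGH so they get a human review
    instead of being silently treated as minimal risk (an assumption).
    """
    return USE_CASE_TIERS.get(use_case, RiskTier.HIGH)


# One general-purpose model, two deployments, two different tiers.
print(classify("general-llm", "email_drafting").value)       # minimal
print(classify("general-llm", "candidate_screening").value)  # high
```

The `model_name` argument is kept only to make the design choice visible: swapping in a more or less capable model would not change the answer, while changing the use case would.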

Risk tiers at a glance

| Tier | What it means | Typical examples | Main duty | Penalty exposure |
|---|---|---|---|---|
| Unacceptable risk | Banned outright | Workplace emotion recognition, untargeted facial scraping, social scoring | Stop use or redesign | Up to €35 million or 7% of global turnover |
| High-risk | Heavy controls required | Hiring, credit, biometrics, critical infrastructure, medical-related AI | QMS, logging, oversight, testing | Up to €15 million or 3% of global turnover |
| Limited risk | Transparency required | Chatbots, deepfakes, AI-generated public content | Disclose AI use and label content | Up to €15 million or 3% of global turnover for transparency failures |
| Minimal risk | No special AI Act duties | Spam filters, basic optimization, video game AI | Normal governance only | No specific AI Act fines if classification is correct |
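The penalty column can be read as a simple formula. Under Article 99, the cap for a company is the fixed amount or the turnover percentage, whichever is higher; for SMEs and start-ups it is whichever is lower. The sketch below encodes only the two tiers shown in the table. The violation labels and the `is_sme` flag are hypothetical inputs, and the result is an upper bound, not a prediction of any actual fine.

```python
# Fine caps for the two tiers shown in the table above (Article 99).
# Values are (fixed amount in EUR, percent of worldwide annual turnover).
FINE_CAPS = {
    "prohibited_practice": (35_000_000, 7),  # Article 5 violations
    "other_obligation": (15_000_000, 3),     # e.g. high-risk and transparency duties
}


def fine_cap(violation: str, worldwide_turnover_eur: int, is_sme: bool = False) -> float:
    """Return the maximum possible fine in EUR (an upper bound, not an estimate)."""
    fixed_eur, percent = FINE_CAPS[violation]
    turnover_based = worldwide_turnover_eur * percent / 100
    # Companies: whichever is higher. SMEs and start-ups: whichever is lower.
    if is_sme:
        return min(fixed_eur, turnover_based)
    return max(fixed_eur, turnover_based)


# EUR 1 billion turnover: 7% (EUR 70 million) exceeds the EUR 35 million floor.
print(fine_cap("prohibited_practice", 1_000_000_000))
# An SME with EUR 20 million turnover: the lower figure, 7% (EUR 1.4 million), applies.
print(fine_cap("prohibited_practice", 20_000_000, is_sme=True))
```

For large companies the percentage quickly dominates, which is why the turnover figure, not the headline euro amount, is the number to watch.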

Where CEOs get surprised

The biggest mistake is assuming the law only hits “AI products.” It does not. The Act can apply to internal tools, third-party SaaS, and systems used by HR or operations teams. If a company uses AI to rank candidates, monitor workers, infer emotion, or profile people in ways that affect jobs or access, it can fall into the high-risk or prohibited zone.

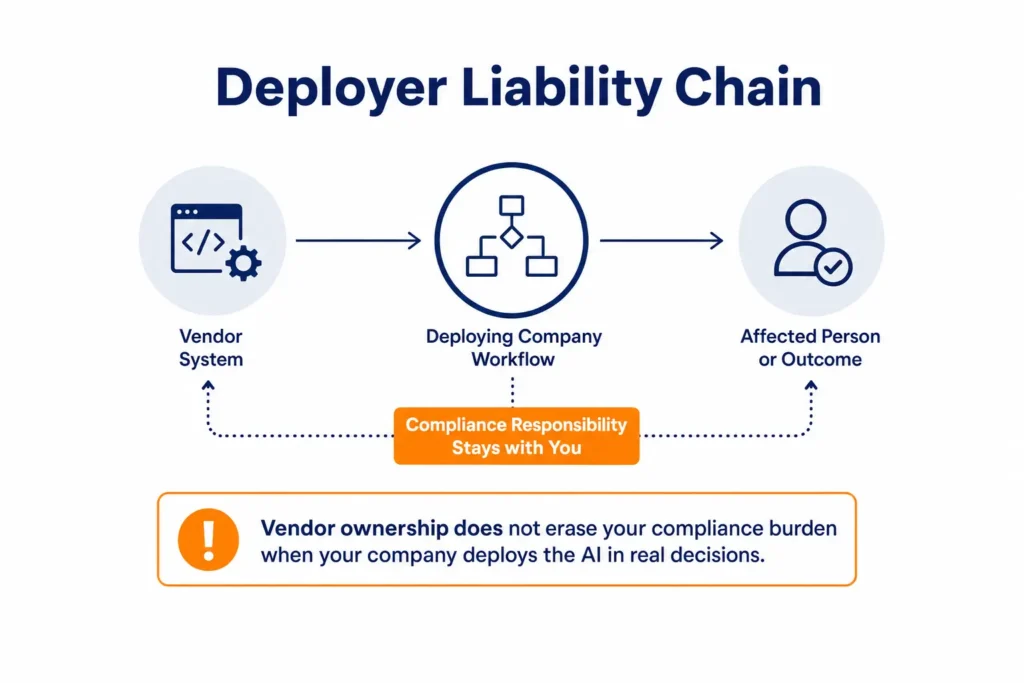

A second mistake is assuming vendor ownership equals vendor liability. It does not. If your company deploys the system, your company may still carry the compliance burden, even when the feature came from a third party. That means procurement, legal, and product teams need to ask harder questions before a contract is signed.

Common myths and reality

| Myth | Reality |
|---|---|
| We do not have an EU office, so the law does not apply. | Physical location is not the key issue. EU impact is. |
| We only use AI tools; we do not build them. | Deployers can still carry obligations, especially for high-risk use. |
| Regulators will only target big tech. | Mid-sized firms are plausible targets, especially in early, precedent-setting cases. |
| Our vendor handles compliance. | Vendor compliance does not remove your deployer liability. |

The banned zone

Article 5 matters because it already applies: its prohibitions have been in force since 2 February 2025. The prohibited list includes subliminal or manipulative techniques, exploitation of vulnerabilities, social scoring, predicting criminal offences based solely on profiling or personality traits, untargeted scraping of facial images, emotion inference in workplaces and schools, biometric categorization to infer sensitive traits, and most real-time remote biometric identification in public spaces for law enforcement. These are not future obligations. They are active bans.

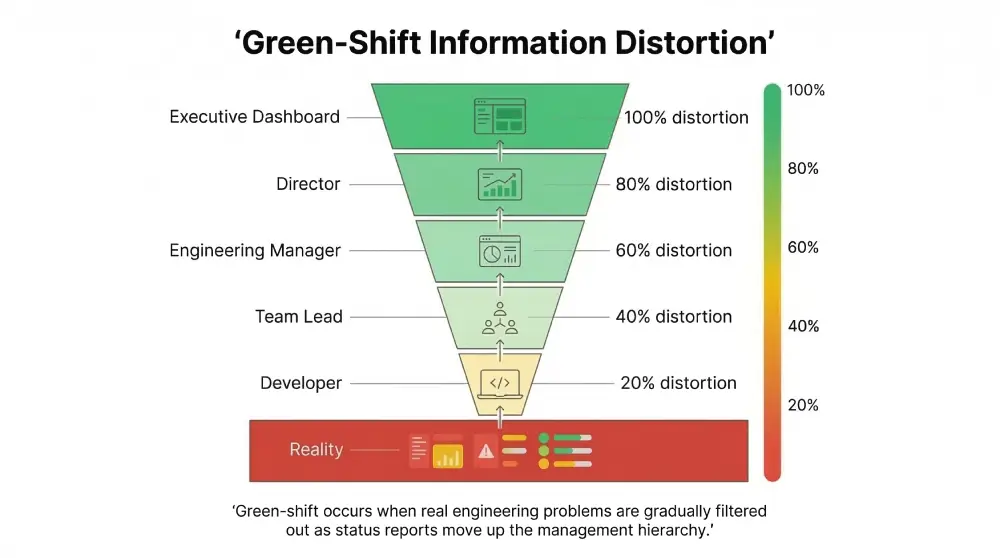

For CEOs, the main risk is not a cartoonishly obvious surveillance product. It is an ordinary business feature that quietly crosses the line. A workforce dashboard that infers stress from typing patterns, tone, or video behavior can become prohibited emotion recognition. A product team might frame it as wellbeing support, but the law looks at the function, not the intention.

Pro-tip: Treat anything involving emotion inference, biometric categorization, or facial scraping as a redline control. If there is doubt, remove the feature from EU-facing systems first.
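One way to operationalize that redline is a release check that refuses to ship an EU-facing deployment with a banned capability enabled. This is a sketch under assumptions: the capability tags and the `Deployment` structure are hypothetical, and a tag list is only as reliable as the team applying it, so it complements legal review rather than replacing it.

```python
from dataclasses import dataclass, field

# Hypothetical capability tags; the names are assumptions, not terms from the Act.
REDLINE_CAPABILITIES = frozenset({
    "emotion_inference",
    "biometric_categorization",
    "facial_scraping",
})


@dataclass
class Deployment:
    name: str
    eu_facing: bool
    capabilities: set[str] = field(default_factory=set)


def redline_violations(deployment: Deployment) -> set[str]:
    """Redline capabilities enabled in an EU-facing deployment (empty set if none)."""
    if not deployment.eu_facing:
        return set()
    return deployment.capabilities & REDLINE_CAPABILITIES


def release_gate(deployments: list[Deployment]) -> bool:
    """Return False (block the release) if any EU-facing deployment crosses a redline."""
    passed = True
    for deployment in deployments:
        hits = redline_violations(deployment)
        if hits:
            print(f"BLOCKED {deployment.name}: {sorted(hits)}")
            passed = False
    return passed


# A "wellbeing" dashboard that infers stress is blocked, whatever it is called.
dashboard = Deployment(
    "wellbeing-dashboard",
    eu_facing=True,
    capabilities={"emotion_inference", "scheduling"},
)
print(release_gate([dashboard]))
```

Because the check keys on capabilities rather than feature names, a dashboard marketed as wellbeing support is still blocked, which matches the Act's focus on function over intention.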

High-risk is the real workload

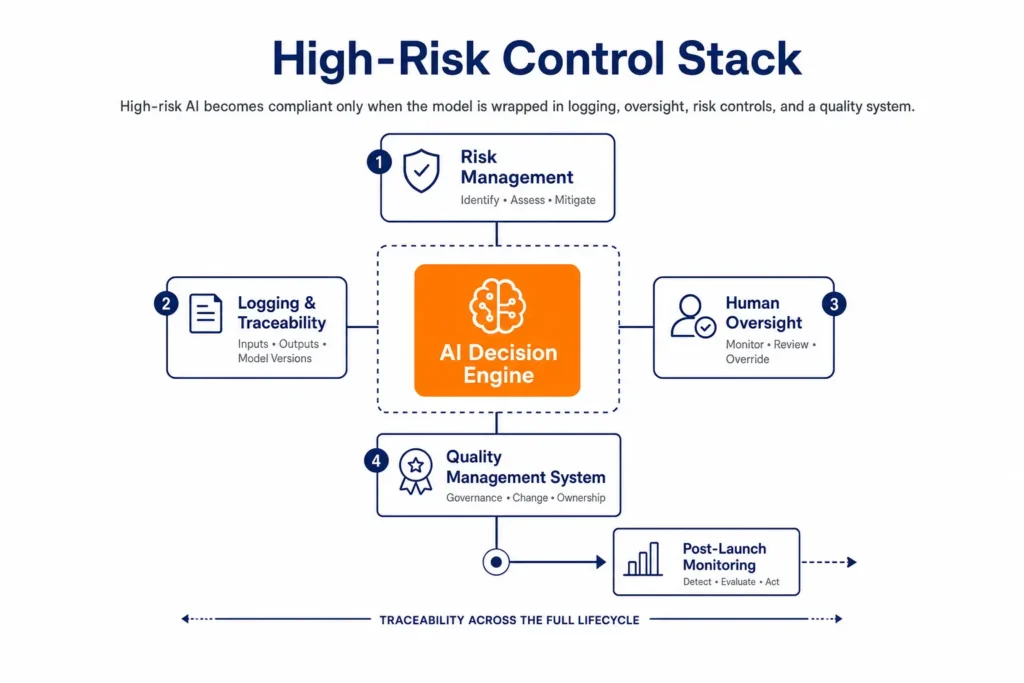

High-risk systems are where most serious enterprise AI lives. The Act lists high-risk use cases, mainly in Annex III, such as employment, worker management, biometrics, education, credit, critical infrastructure, and other systems that can affect rights or safety. These systems need more than policy language. They need an operational compliance stack.

Article 17 requires a documented quality management system. That system must include compliance strategy, design controls, testing, validation, data management, risk management, post-market monitoring, incident reporting, communications procedures, record-keeping, resource management, and accountability. In practice, this turns compliance into a standing engineering function rather than a one-time review.

What high-risk teams must build

| Requirement | What it means in practice |
|---|---|
| Quality management system | Written policies, controls, ownership, and change management |
| Risk management | Known and foreseeable risks must be identified and reduced throughout the lifecycle |
| Logging | Decisions, inputs, outputs, and model versions must be traceable |
| Human oversight | Humans must be able to monitor, understand, and override outputs |
| Incident response | Serious incidents need formal reporting and escalation paths |
| Post-market monitoring | Systems must be watched after launch, not just during testing |

The logging requirement is especially important. Article 12 requires systems to support automatic event recording over the system’s lifetime. That is not ordinary observability. It is forensic traceability. If your current stack cannot show which model version produced which decision using which data, you do not yet have a compliant architecture.
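To illustrate what "which model version produced which decision using which data" can look like in code, here is a minimal append-only decision log in Python. It is a sketch, not a certified Article 12 implementation: the field names are assumptions, inputs are stored as SHA-256 digests on the assumption that raw inputs are retained elsewhere under your data-retention rules, and the hash chaining is a tamper-evidence design choice that the Act does not prescribe. The `reviewer` and `override` fields show where human-oversight actions can be recorded alongside the automated output.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Any, Optional

GENESIS_HASH = "0" * 64


def _digest(payload: Any) -> str:
    """SHA-256 of a JSON-serializable payload, with keys sorted for stability."""
    return hashlib.sha256(json.dumps(payload, sort_keys=True).encode("utf-8")).hexdigest()


class DecisionLog:
    """Append-only, hash-chained log of automated decisions (illustrative sketch)."""

    def __init__(self) -> None:
        self.records: list[dict] = []

    def append(
        self,
        system_id: str,
        model_version: str,
        input_data: Any,
        output: Any,
        reviewer: Optional[str] = None,
        override: Optional[Any] = None,
    ) -> dict:
        record = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "system_id": system_id,
            "model_version": model_version,
            "input_sha256": _digest(input_data),  # raw input assumed stored elsewhere
            "output": output,
            "reviewer": reviewer,  # who exercised human oversight, if anyone
            "override": override,  # the human's replacement decision, if any
            "prev_hash": self.records[-1]["hash"] if self.records else GENESIS_HASH,
        }
        record["hash"] = _digest(record)
        self.records.append(record)
        return record

    def verify(self) -> bool:
        """Recompute the chain; an edited record, or one removed mid-chain, breaks it."""
        expected_prev = GENESIS_HASH
        for record in self.records:
            body = {k: v for k, v in record.items() if k != "hash"}
            if record["prev_hash"] != expected_prev or _digest(body) != record["hash"]:
                return False
            expected_prev = record["hash"]
        return True


log = DecisionLog()
log.append("cv-screener", "v2.3.1", {"candidate_id": 42}, "shortlist")
log.append("cv-screener", "v2.3.1", {"candidate_id": 43}, "reject",
           reviewer="hr-lead", override="shortlist")
print(log.verify())  # True
```

One limitation worth knowing: truncating the newest records is not detected by this sketch, so a production design would also need to anchor the latest hash somewhere outside the log itself.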

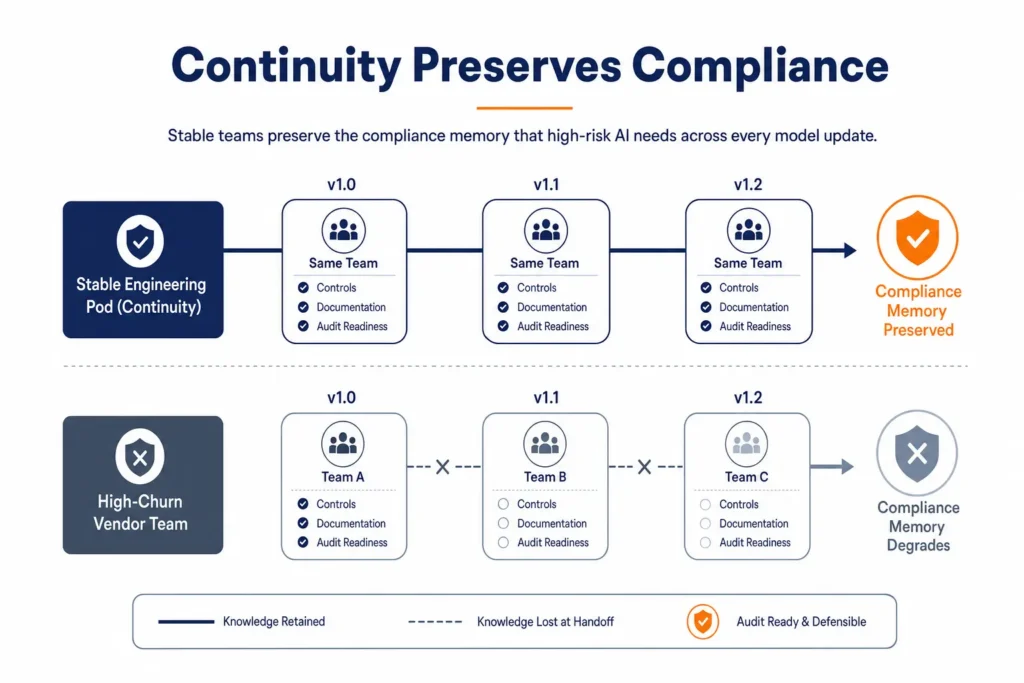

Why the outsourcing model matters

For companies using outsourced engineering, compliance depends on team continuity as much as technical skill. A high-risk AI system needs people who understand why controls exist, not just how to deploy code. If key engineers leave often, institutional memory leaks out with them. That makes human oversight weaker, documentation less reliable, and compliance harder to prove.

That is why stable engineering pods matter. The research behind this article argues that lower-turnover delivery environments, including Nepal-based pods, can be structurally better for long-lived compliance work than high-churn markets. The key point is not geography for its own sake. It is continuity, ownership, and the ability to preserve compliance knowledge across many update cycles.

Stop Managing. Start Shipping.

Stop fixing “outsourced” spaghetti code.

Deploy an ISO 27001-certified engineering pod that hits your internal linting standards and security benchmarks from Day 1.

Delivery model tradeoffs

| Model | Strength | Weak point | Compliance fit |
|---|---|---|---|
| Staff augmentation | Fast to start, flexible | Fragmented accountability and knowledge loss | Weak for long-lived high-risk systems |
| Managed services | Clearer ownership and process | Needs disciplined scope control | Stronger |
| Build-operate-transfer (BOT) | Can preserve knowledge and handoff structure | Requires mature governance | Strongest for stable compliance pods |
| High-churn vendor team | Fast scaling | Loss of regulatory knowledge | Risky |

The cost equation

Waiting is expensive. The research behind this article puts the annual compliance cost of a single high-risk system at around 52,000 euros, while a single non-compliance event can reach 15 million euros or more, depending on the violation. Retrofitting controls into a live system can cost roughly 35 percent more than building them in from the start. For a CEO, that means delay is not a neutral choice. It is a cost amplifier.
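Putting these figures side by side shows the scale of the asymmetry. Using only the numbers quoted in this section (illustrative, not a forecast for any specific company), one €15 million penalty equals nearly three centuries of the estimated annual compliance spend, and the retrofit premium multiplies whatever the build-in cost $C_{\text{build-in}}$ turns out to be:

```latex
\begin{aligned}
\frac{15{,}000{,}000\ \text{EUR}}{52{,}000\ \text{EUR per year}} &\approx 288\ \text{years of compliance spend} \\
C_{\text{retrofit}} &\approx 1.35 \times C_{\text{build-in}}
\end{aligned}
```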

| Scenario | Typical cost profile | Business risk |
|---|---|---|
| Proactive sprint | Upfront engineering and governance work | Lower enforcement risk and smoother sales cycles |
| Late retrofit | Higher build cost, slower releases, more disruption | Higher chance of launch delays and compliance gaps |
| Prohibited use | Potential top-tier fine and forced removal | Existential risk for the feature or product |

Enterprise buyers will also start asking for evidence. Compliance is becoming part of procurement. That means a company that can show documentation, traceability, and clear controls may move faster in sales than one that cannot. In that sense, compliance is not just defensive. It is also market access.

What to do in 90 days

The fastest useful response is to create a clean internal map of AI use. Do not start with a policy memo. Start with an inventory. Once you know where AI exists, you can classify it and fix what matters most.

- Audit every AI system. List all in-house, third-party, and embedded systems in production, staging, and vendor tools. Include HR, customer analytics, content generation, fraud, and scheduling systems.

- Classify each system. Decide whether it is prohibited, high-risk, limited risk, or minimal risk. If a system profiles people or affects employment, credit, education, or health, treat it as high-risk until reviewed.

- Remove banned features. Shut off emotion inference, facial scraping, biometric categorization, and other redline features in EU-facing contexts.

- Build the control stack. Add QMS documentation, logging, human oversight, and incident response for high-risk systems.

- Lock down vendors. Put compliance duties into contracts. Do not rely on vendor assurances alone.

- Assign ownership. Name a compliance owner for each high-risk system. If nobody owns it, nobody controls it.
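The audit, classification, vendor, and ownership steps can be prototyped as a small triage script over an inventory. The sketch below is hypothetical: the `AISystem` fields and source labels are assumptions, and it automates only the conservative rule from the classification step (anything that profiles people or touches employment, credit, education, or health is provisionally high-risk). Everything else is routed to manual classification rather than guessed.

```python
from dataclasses import dataclass, field
from typing import Optional

# Domains the classification step says to treat as high-risk until reviewed.
HIGH_RISK_DOMAINS = frozenset({"employment", "credit", "education", "health"})


@dataclass
class AISystem:
    name: str
    source: str  # assumed labels: "in-house", "third-party", or "embedded"
    affects: set[str] = field(default_factory=set)
    profiles_people: bool = False
    owner: Optional[str] = None
    contract_has_compliance_duties: bool = False  # hypothetical field for vendor systems


def provisional_tier(system: AISystem) -> str:
    """Apply only the conservative rule; everything else goes to manual review."""
    if system.profiles_people or system.affects & HIGH_RISK_DOMAINS:
        return "high-risk (pending review)"
    return "needs manual classification"


def triage(inventory: list[AISystem]) -> list[str]:
    """Turn an inventory into a list of follow-up actions."""
    actions = []
    for system in inventory:
        tier = provisional_tier(system)
        actions.append(f"{system.name}: {tier}")
        if tier.startswith("high-risk") and system.owner is None:
            actions.append(f"{system.name}: assign a compliance owner")
        if system.source != "in-house" and not system.contract_has_compliance_duties:
            actions.append(f"{system.name}: add compliance duties to the vendor contract")
    return actions


inventory = [
    AISystem("resume-ranker", "third-party", affects={"employment"}),
    AISystem("support-chatbot", "in-house", owner="cx-lead"),
]
for action in triage(inventory):
    print(action)
```

A spreadsheet can do the same job; the value of writing the rules down explicitly is that every high-risk row ends up with a named owner and every vendor system with a contract check.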

The CEO decision

The real question is not whether the EU AI Act matters. It does. The question is whether your company will discover its exposure early or during an audit, a procurement review, or an enforcement action. For mid-sized tech companies and non-EU founders selling into Europe, that timing difference can shape the product roadmap, the delivery model, and the sales cycle.

The best response is practical. Map the systems, remove the banned ones, classify the rest, and build the controls into your operating model. If your AI stack is serious, your compliance stack has to be serious too.
